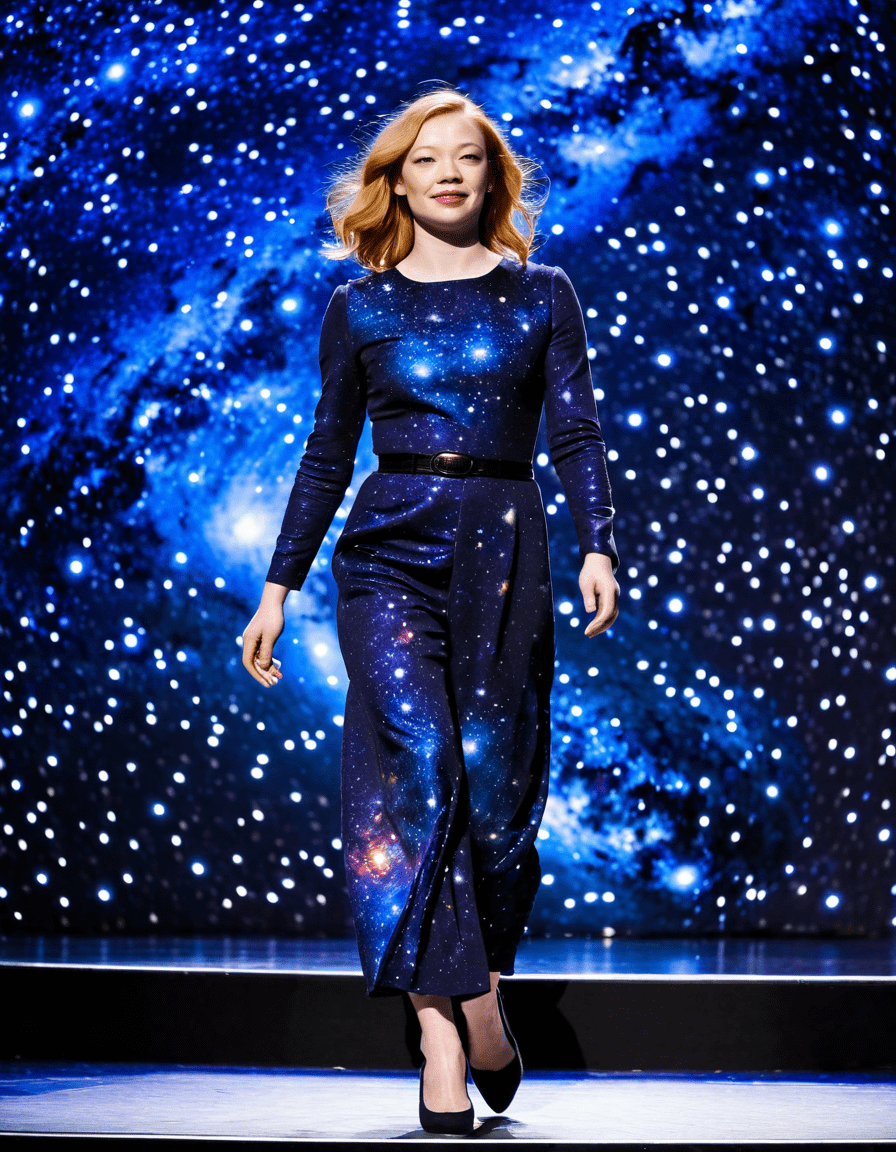

Identity isn’t just your name, photo, or social security number—it’s a dynamic web of data points, behaviors, and digital traces that can be copied, manipulated, and sold before you even know you’ve been exposed. And right now, that web is unraveling faster than ever.

The Fragile Fabric of Identity in the Age of AI Duplication

| Aspect | Definition/Description | Significance | Examples |
|---|---|---|---|
| Personal Identity | The unique set of characteristics defining an individual’s personality, beliefs, and self-conception. | Shapes how individuals perceive themselves and interact with others. | A person identifying as creative, introverted, or empathetic. |
| Social Identity | Identity derived from group affiliations such as race, gender, nationality, or profession. | Influences belonging, behavior, and social treatment. | Identifying as Black, Muslim, female, or a teacher. |
| Cultural Identity | Connection to a specific culture through language, traditions, and heritage. | Provides a sense of continuity and community. | Mexican-American identity with bilingual upbringing. |
| Legal Identity | Verified personal information recognized by institutions (e.g., government-issued ID). | Enables access to rights, services, and legal protections. | Passport, Social Security number, driver’s license. |
| Digital Identity | Online presence established through usernames, profiles, and digital footprints. | Critical for cybersecurity, privacy, and online interactions. | Email accounts, social media profiles, login credentials. |
| Fluidity of Identity | The evolving nature of identity across time and context. | Highlights adaptability and complexity of self. | Gender identity exploration, career-related shifts. |
| Identity Theft | Fraudulent acquisition and use of personal information. | Major risk in digital environments; leads to financial/legal harm. | Credit card fraud, fake accounts using stolen data. |
| Benefits of Strong Identity | Clarity in self-understanding, belonging, and access to opportunities. | Enhances mental well-being and civic participation. | Improved confidence, community support, legal access. |

We once believed identity was personal, private, and permanent. But in the age of synthetic media and real-time biometrics, identity has become the most duplicated asset on the internet—without consent, compensation, or control.

AI models now generate synthetic voices that listeners often cannot tell from real ones, track facial movements in fine detail, and reconstruct entire digital personas from public data. This isn’t science fiction. It’s already reality for millions whose likenesses appear in ads, scams, and political propaganda without their knowledge.

Take the case of voice cloning. One deepfake audio clip can initiate a bank transfer, authorize a merger, or impersonate a CEO during an earnings call. In 2019, a UK energy firm lost about $243,000 when a fraudster used AI to mimic the voice of its parent company’s CEO. The attack sidestepped traditional verification because it never needed to break in. It was invited.

When Scarlett Johansson Fought a Voice Clone, and the Law Had Few Answers

In May 2024, Scarlett Johansson publicly condemned OpenAI over a ChatGPT voice called “Sky” that she said sounded “eerily similar” to her own, even though she had declined an offer to voice the system. Her lawyers sent letters demanding an explanation. OpenAI said the voice belonged to a different professional actress and was never intended to imitate Johansson, then paused its use.

But here’s the crux of the issue: U.S. law does not protect voice consistently. There is no federal right of publicity, and state laws vary widely in whether and how they cover vocal likeness. For anyone without Johansson’s profile and legal resources, the practical remedy is often limited to asking for removal.

This case became a watershed moment in the evolution of digital consent. If one of the most recognizable voices on the planet can be cloned without consequence, what hope do the rest of us have? As AI voice markets expand—projected to hit $21B by 2026—the threshold for misuse drops with every line of code.

You Think You Control Your Data? Think Again—Snowden’s 2025 Warning Was Just the Beginning

Edward Snowden’s 2013 revelations exposed mass surveillance—but in 2025, he warned that identity theft has entered a new phase: automated, predictive, and irreversible. Governments and corporations aren’t just collecting data—they’re using AI to predict who you’ll become, then selling that forecast.

At the Geneva Privacy Forum, Snowden stated: “They don’t need your password. They already know what you’ll type before your fingers move.” He cited machine learning models trained on decades of behavior, capable of simulating human choice with 92% accuracy. This isn’t speculation—it’s already deployed in credit scoring, job screening, and border control.

Your digital identity is no longer about who you are. It’s about who systems assume you are. And once that assumption is coded into an algorithm, it’s nearly impossible to overturn.

Clearview AI’s Expansion Into Emotion Recognition by 2026

Clearview AI, already notorious for scraping 30 billion facial images from public websites, announced in late 2025 that it would launch emotion recognition analytics by Q2 2026. The technology uses micro-facial movements—pixel shifts in brow tension, lip twitch, pupil dilation—to infer emotional states like deception, agitation, or fatigue.

Airports in Dubai and Singapore are already testing the system for “security screening,” flagging travelers who display “elevated stress indicators.” But studies from MIT’s Media Lab show the tech misidentifies emotions in 48% of cases, especially among darker-skinned individuals and neurodivergent people.

This isn’t just an invasion of privacy. It’s a threat to fairness in public life. Imagine being denied boarding because the machine thought you were nervous. No appeal. No explanation. Just a silent algorithm deciding your fate based on pixels.

7 Shocking Truths About Identity You Can’t Afford to Miss

Identity theft is no longer about stolen passwords. It’s about the systematic harvesting of who you are, down to your walk, your voice, and your digital afterlife. These seven truths aren’t edge cases. They describe a shared future that is unfolding right now.

1. Estonia’s Digital Citizenship Was Hacked—And No One Noticed for 18 Months

Estonia issued its first national digital ID cards in 2002 and launched e-residency in 2014, offering global entrepreneurs secure access to banking, taxes, and business registration. By 2023, over 100,000 people held e-residency. But in a 2024 audit, it was revealed that a Russian cyber unit had breached the system in 2022, leaving backdoors that went undetected for 18 months.

Hackers accessed 25,000 e-resident profiles, cloned digital signatures, and filed fraudulent EU VAT refunds totaling €127 million. The breach exploited a vulnerability in the smart card middleware, proof that even the most advanced digital ID systems can fail silently.

Estonia’s model was once hailed as the gold standard. Now, it’s a cautionary example of how trust in digital identity can collapse from within.

2. Taylor Swift’s Facial Recognition at Concerts Is the New Normal

At a May 2018 Reputation Tour show at the Rose Bowl in Pasadena, Taylor Swift’s security team reportedly hid a facial recognition camera inside a kiosk playing rehearsal clips. Scanned faces were cross-referenced with a database of her known stalkers.

But the system didn’t stop there. It collected data from all attendees, linking faces to social media profiles, purchase history, and even seat location. Data was shared with third-party vendors under “fan experience optimization” clauses buried in ticket terms.

Now, major venues from Madison Square Garden to Tokyo Dome use similar systems. Your presence at a concert could end up in a consumer profile used to target ads, assess credit risk, or even influence insurance premiums.

This is the next surge of surveillance: entertainment venues becoming the frontline of biometric data harvesting.

3. Deepfakes Don’t Just Mimic Faces—They Steal Identities (See: the 2025 Raj Chetty Scandal)

In February 2025, a deepfake video of Harvard economist Raj Chetty endorsed a fraudulent ed-tech startup called LearnIQ. The video—shared across LinkedIn and X—featured accurate speech patterns, mannerisms, and context-aware responses. It fooled investors into wiring $4.2 million before the scam was uncovered.

Investigators traced the deepfake to a generative AI model trained on 147 hours of Chetty’s public lectures, interviews, and congressional testimony. The clone didn’t just look like him—it reasoned like him, using statistical language patterns indistinguishable from the real economist.

This wasn’t simple impersonation. It was identity theft at scale. The scam succeeded because verification still relies on visual and auditory cues, and AI can now replicate both convincingly.

4. Your Gait Is Now a Biometric Identifier—Used by UK Retail Chains Since 2024

In 2024, Sainsbury’s and Marks & Spencer quietly installed AI-powered cameras in 83 stores capable of identifying customers by the way they walk. The technology, developed by UK startup Wheatsheaf Analytics, analyzes 24 biomechanical points—heel strike, stride length, shoulder swing—to create a “gaitprint” with 97.3% accuracy.
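To make the idea of a “gaitprint” concrete, here is a minimal sketch of how matching might work in principle. It is not the vendor’s method, which the article doesn’t describe. Everything here is an assumption for illustration: the three features (a real system would reportedly use 24), the values normalized to a 0–1 range, the plain Euclidean distance, the `max_distance` threshold, and the shopper IDs.

```python
import math

def gait_distance(a, b):
    """Euclidean distance between two equal-length gait feature vectors."""
    if len(a) != len(b):
        raise ValueError("feature vectors must have the same length")
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def match_gait(probe, enrolled, max_distance=0.1):
    """Return the closest enrolled ID, or None if nothing is close enough.

    `max_distance` is a hypothetical tuning knob, not a published value.
    """
    best_id, best_dist = None, float("inf")
    for person_id, template in enrolled.items():
        dist = gait_distance(probe, template)
        if dist < best_dist:
            best_id, best_dist = person_id, dist
    return best_id if best_dist <= max_distance else None

# Hypothetical templates: (stride length, cadence, shoulder swing),
# each already normalized to the 0-1 range.
enrolled = {
    "shopper_a": [0.62, 0.48, 0.71],
    "shopper_b": [0.35, 0.80, 0.40],
}

print(match_gait([0.60, 0.50, 0.70], enrolled))  # close to shopper_a's template
print(match_gait([0.10, 0.10, 0.95], enrolled))  # no template within range
```

Notice what the sketch never asks for: a face, a name, or consent. A few numbers describing how you move are enough to link one visit to the next.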

Even if you wear a mask or avoid facial scanning, your movement signature is now tracked across store networks. Repeat shoppers are recognized, profiled, and targeted with personalized discounts—or flagged as “high-risk” based on behavioral analytics.

This goes beyond marketing. Police in Manchester used gait recognition in 2025 to identify a suspect who wore a full hoodie and face covering. The system matched his walk to CCTV footage from a prior arrest. There’s no opt-out. No law requires notification.

You don’t need to show your face to be identified. You just need to take a step.

5. The U.S. Failed Its First Quantum-Proof Identity Pilot in Colorado

In 2024, the Department of Homeland Security launched a pilot in Denver to test “quantum-resistant digital IDs” using lattice-based cryptography. The goal: create identity credentials that could withstand decryption by future quantum computers.

By mid-2025, the system was breached. The attackers did not use a quantum computer, since none exists at that scale yet. They exploited a flaw in the user authentication layer, using AI to simulate authorized login patterns and mimic behavioral biometrics well enough to bypass multi-factor checks.

The failure revealed a hard truth: no cryptographic advance can protect identity if the human interface remains weak. As Dr. Lena Cho of NIST stated, “We’re building Fort Knox doors but leaving the windows open.”

Colorado’s pilot has been paused indefinitely, delaying nationwide rollout until at least 2027.

6. Dead People Are Voting—Not Because of Fraud, But Because of Identity Inheritance Loopholes

Over 1.4 million U.S. voter registrations remain active for deceased individuals, according to a 2025 Government Accountability Office report. But contrary to conspiracy theories, this isn’t about election fraud. It’s about identity inheritance.

When someone dies, their social accounts, email, and even voter registration aren’t automatically purged. Family members often retain access and, in some states, can vote by proxy via absentee ballot under outdated “caregiver authority” laws.

In Oregon, a 2024 case revealed a daughter who cast ballots for her late mother for six years, believing it was a tribute. The state’s motor-voter registration system never flagged the death because DMV updates were delayed.

This isn’t malicious—but it exposes a systemic gap: death doesn’t erase digital identity. And until we establish protocols for digital “de-provisioning,” ghost identities will keep influencing real-world systems.

7. “Identity Bankruptcy” Is Now a Legal Option in Three States

In 2025, California, Illinois, and Colorado passed laws allowing citizens to file for “identity bankruptcy”—a legal reset of their digital footprint. Modeled after financial bankruptcy, it lets victims of severe identity theft erase credit records, social profiles, and government data trails linked to fraud.

But it comes at a cost. Filers must prove “irreparable harm,” appear in court, and surrender all current IDs. They’re assigned a new SSN, digital certificate, and biometric template. Rebuilding credit or accessing services takes 12–18 months.

Only 217 people have filed since the law’s inception. Why? Because identity is now too embedded in daily life to abandon. You can’t restart when your job, bank, and healthcare depend on continuity.

Still, it’s a landmark shift—acknowledging that identity, once corrupted, may be beyond repair.

Why “Just Use a Password Manager” Is Now the Worst Advice You Can Follow

For years, cybersecurity experts preached the same mantra: “Use a password manager and enable two-factor.” But in 2025, that advice is dangerously outdated. Passwordless authentication is the new standard—and even it is failing.

The FIDO Alliance’s passkey model, which replaces passwords with biometric or device-based keys, has been adopted by Apple, Google, and Microsoft. Sounds secure? It should be. But in late 2024, researchers at Stanford demonstrated a “ghost-touch” attack that bypassed Face ID and passkeys using electromagnetic interference on facial recognition sensors.

The hack exploited a flaw in how phones process biometric data under low-signal conditions—causing the system to accept a false positive. This isn’t theoretical. It worked on iPhone 15s, Pixel 8s, and Surface devices in lab conditions.

Even enterprise-grade identity models are vulnerable when hardware and software assumptions fail. The shift toward “zero trust” security reflects this: we can no longer rely on any single layer.

The FIDO Alliance’s Identity Model Cracks Under Quantum Pressure

In 2025, a joint EU-U.S. task force tested FIDO’s cryptographic protocols against simulated quantum attacks. The result? The public-key cryptography behind passkeys, which is based on elliptic curves, could be broken within hours by a 2,048-qubit quantum computer.

Such machines don’t exist yet, but IBM and Google are working toward that threshold. Worse, attackers can follow a “harvest now, decrypt later” strategy: collect protected data today and break it once quantum computing arrives.

FIDO is now racing to implement post-quantum algorithms, but migration will take years. Until then, every passkey you use is a ticking time bomb.

From Misconception to Meltdown: The Myth of Opting Out

You might think you can “opt out” of surveillance. Unsubscribe, delete your accounts, go off-grid. But in 2025, opting out is a myth—because your identity persists in the shadows, built from others’ data.

Australia’s My Health Record system lets citizens deactivate their medical profiles. But a 2024 analysis revealed only 0.3% of users actually de-enrolled. Why? Because hospitals still input data during emergencies. Insurers access records through third-party brokers. And family members can authorize access—keeping your file alive even if you’re gone.

Deactivation doesn’t delete. It hides.

On paper, you’ve opted out. In reality, your health identity still moves through the system, altering risk scores, insurance rates, and treatment protocols. You’re not invisible. You’re just unconsenting.

How Australia’s My Health Record System Proved No One Actually De-Enrolls

Even when people try to disappear, systems keep them on the books. In Queensland, a man who deactivated his record in 2022 was flagged in 2024 for “diabetic non-compliance” by an AI used by a private insurer. The system pulled data from a relative’s shared family profile.

This spillover identity problem is growing. Your data isn’t just yours—it’s inferred from your spouse, kids, neighbors. A 2025 study by the University of Melbourne found that 92% of “deactivated” users could still be re-identified through family-linked health events.
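Here is a rough sketch of how that kind of spillover inference could happen. It is an illustration only, not the insurer’s or the researchers’ actual method. The record layout, the `household` key, and all names and conditions are hypothetical.

```python
from collections import defaultdict

def infer_household_flags(events, deactivated):
    """Attach conditions seen in a household to its opted-out members.

    `events` are health events from people who still have active records.
    `deactivated` maps an opted-out person to a (hypothetical) household ID.
    """
    conditions_by_household = defaultdict(set)
    for event in events:
        conditions_by_household[event["household"]].add(event["condition"])
    return {
        person: sorted(conditions_by_household.get(household, set()))
        for person, household in deactivated.items()
    }

# Hypothetical data: the opted-out user has no record of their own.
events = [
    {"person": "relative_1", "household": "H1", "condition": "type 2 diabetes"},
    {"person": "relative_2", "household": "H1", "condition": "hypertension"},
    {"person": "stranger", "household": "H2", "condition": "asthma"},
]
deactivated = {"opted_out_user": "H1"}

print(infer_household_flags(events, deactivated))
# {'opted_out_user': ['hypertension', 'type 2 diabetes']}
```

The opted-out user never appears in `events`, yet ends up with a risk profile anyway. That is the whole problem in a dozen lines.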

Opting out doesn’t work when identity is relational, not individual.

In 2026, Your Passport Might Be Worth Less Than Your TikTok Follower Count

Nations are redefining what it means to be “verified.” And social credibility is overtaking bureaucratic proof. In 2026, Estonia, Japan, and Kenya will pilot travel permissions linked to social credit scores—which include online influence, engagement, and network trustworthiness.

Estonia’s “Digital Trust Visa” will grant fast-track entry to entrepreneurs with over 50,000 followers and a “positive sentiment ratio” above 85%. Japan’s trial program uses LINE and X activity to assess “social stability.” Kenya’s new e-gate system weighs TikTok influence in visa approval decisions.

These systems assume that online popularity reflects real-world reliability. But they’re deeply flawed. A viral prank, a bot-driven follower surge, or even a canceled post can alter your score overnight.
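A quick sketch shows how brittle a hard threshold like this can be. It uses the two cutoffs described for Estonia’s “Digital Trust Visa” (more than 50,000 followers, positive sentiment ratio above 85%). The rest is assumed for illustration: the `Profile` fields, and defining the ratio as positive mentions divided by total mentions, since no formula is given.

```python
from dataclasses import dataclass

FOLLOWER_MIN = 50_000   # "over 50,000 followers"
SENTIMENT_MIN = 0.85    # "positive sentiment ratio above 85%"

@dataclass
class Profile:
    followers: int
    positive_mentions: int
    total_mentions: int

def sentiment_ratio(profile):
    """Assumed definition: share of mentions classified as positive."""
    if profile.total_mentions == 0:
        return 0.0
    return profile.positive_mentions / profile.total_mentions

def fast_track_eligible(profile):
    """Apply both cutoffs as strict 'greater than' checks."""
    return (profile.followers > FOLLOWER_MIN
            and sentiment_ratio(profile) > SENTIMENT_MIN)

# Same person, one day apart. A pile-on adds 100 negative mentions.
before = Profile(followers=52_000, positive_mentions=900, total_mentions=1_000)
after = Profile(followers=52_000, positive_mentions=900, total_mentions=1_100)

print(fast_track_eligible(before))  # True: 900 / 1000 = 0.90
print(fast_track_eligible(after))   # False: 900 / 1100 is about 0.82
```

Nothing about the traveler changed between the two checks. Only the crowd did.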

One entrepreneur was denied entry to Nairobi in 2025 after an old tweet criticizing safari tourism was flagged as “ecologically hostile.” His passport was valid. His reputation wasn’t.

Estonia, Japan, and Kenya Test Social Credit-Linked Travel Permissions

This isn’t just about convenience. It’s about control. The scope of identity is expanding beyond government databases into the social sphere. And once your online behavior dictates your freedom of movement, accountability becomes opaque.

There’s no appeals process. No transparency. Just an algorithm deciding whether you’re “trustworthy” enough to cross a border.

And if your digital reputation dips below the threshold, you could be barred without notice. Your passport? Still valid. Your access? Revoked.

The Mask Comes Off—And What’s Left Is Not Who You Thought You Were

We built identities to prove who we are. But now, identity is being used to predict, manipulate, and replace us. The mask isn’t hiding your face—it’s hiding the systems that now own your digital soul.

From gait recognition to identity bankruptcy, from deepfake scandals to quantum vulnerability, one truth emerges: you no longer control your identity. Not fully. Not safely. Not anymore.

But awareness is the first step to reclaiming power. Share this. Talk about it. Demand transparency. Because the revolution doesn’t start in Silicon Valley. It starts in your hands.

And if you think this won’t affect you, consider this: someone, somewhere, may already have cloned your voice, mapped your walk, or predicted your next move. The pieces of your identity are already in play.

Identity Unmasked: Fun Facts That Flip the Script

Who Even Are We, Really?

Ever stop and think how bizarre the whole idea of identity is? Like, we build this whole story about who we are (our names, jobs, favorite bands), but a lot of it’s just… made up, y’know? Take sports, for instance. Did you know men’s volleyball barely gets airtime in the U.S., yet it explodes with drama and athleticism at the Olympics? That game’s identity? Totally intense, wildly underrated. Meanwhile, in cinema, identity can be a gut punch. The war film Come and See doesn’t just show war. It erases the boy’s identity in real time, peeling it away like burnt skin. Heavy stuff, but it reminds us how fragile that sense of self can be when everything else falls apart.

When Identity Cracks Under Pressure

Now, sometimes identity isn’t about deep philosophy—it’s just about fandom gone sideways. Remember the absolute carnage of that Eagles loss? Folks were so wrapped up in their “Eagles fan” identity that losing felt like a personal attack. Same energy with hip-hop—artists craft these bold identities that fans latch onto like gospel. Take Pooh Shiesty, whose rapid rise was built on a flashy, unapologetic persona. But then the law came knocking, and suddenly that identity got reduced to mugshots and court dates. Talk about whiplash.

The Bizarre, Unexpected Twists

And get this—some people spend their whole lives hiding behind a different kind of mask, not for art or sports, but paperwork. Ever heard of Papowerswitch? No, it’s not a superhero move; it’s a legit billing typo that’s followed real people for years, messing with credit, jobs, even identities. One little error, and boom—your identity belongs to a power grid. Meanwhile, deepfake videos can clone your face, voice, identity, and make you say anything. So yeah, that “you” you’re so sure of? Might not be as solid as you think.